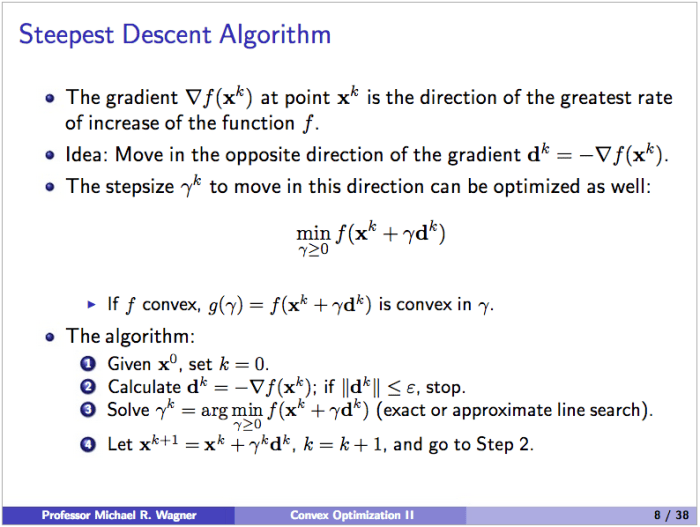

As a homework assignment in a PhD quantitative methods course I took for fun this past quarter – which turned out to be very difficult – we were asked to implement the steepest decent algorithm. This algorithm provides a numeric method to find the minimum of an unconstrained optimization problem. I choose to implement the algorithm in R.

The idea of the algorithm is the following:

Given a particular function it is possible to find a closed form expression for gamma by simply taking the derivative and setting it equal to zero. We were meant only to solve the algorithm for a specific function and associated gamma, but I wrote a more robust R function. It takes an generic function and gamma, an initial location on the function to start the decent, and an epsilon to use as a stopping condition (by changing epsilon we can get an arbitrarily accurate solution).

steepestDecent <- function(x.initial, func = NULL, gamma = NULL, epsilon) { # Implement error handling if(is.null(func) || is.null(gamma)) { stop("Error: Please enter both a function and gamma.") } require(numDeriv) xk <- x.initial k <- 1 xk.vector <- data.frame(k,func(xk)) result <- try(dk <- (-1)*grad(func,xk)) if(class(result) == "try-error") { stop("Error: Make sure dimensions of function and x.star match.") } dk.norm <- norm(dk,'2') if(dk.norm <= epsilon) { message(sprintf(paste( "Error: Starting location is within epsilon tolerance.", "Function value at (%s,%s) is %s.", "Direction norm is %s.", sep=" "), xk[1],xk[2],func(xk),dk.norm)) stop } while(dk.norm > epsilon) { dk <- (-1)*grad(func,xk) dk.norm <- norm(dk,'2') gamma <- gamma(xk,dk) xk <- xk + gamma*dk k <- k + 1 current.value <- data.frame(k,func(xk)) xk.vector <- rbind(xk.vector,current.value) } ratio.vector <- NULL index <- NULL func.x.star <- func(xk) for(i in 1:(length(xk.vector[[1]]) - 1)) { try(ratio.vector <- rbind(ratio.vector,((xk.vector[[i+1,2]] - func.x.star)/ (xk.vector[[i,2]] - func.x.star)))) if(class(result) == "try-error") {stop} index <- rbind(index,i) } ratio.plot <- plot(index[,1] + 1,ratio.vector[,1], main="A Plot of Convergence Ratio vs. K", xlab="K", ylab="Convergence Ratio") func.plot <- plot(xk.vector$k,xk.vector$func.xk., main="A plot of f(x^k) as a function of k", xlab="K Values", ylab="Function Values") numeric.solution <- sprintf( "The numerical approximation of the unconstrained minimum for this function is %s, %s.", round(xk[1]), round(xk[2])) print(xk.vector) return(numeric.solution) }

The function and gamma can be defined separately depending on the problem. We were told to use the following function and gamma:

My output for the given function and gamma are shown below:

> x.initial <- c(0,0) > epsilon <- .01 > steepestDecent(x.initial,func,gamma,epsilon) [1] "The numerical approximation of the unconstrained minimum for this function is 10, -1."

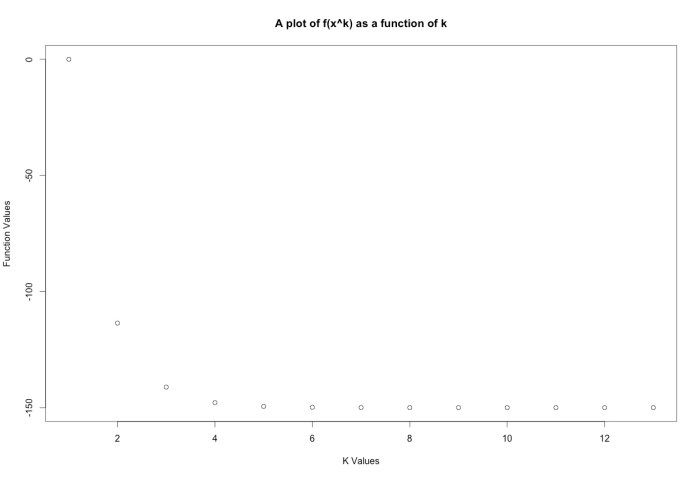

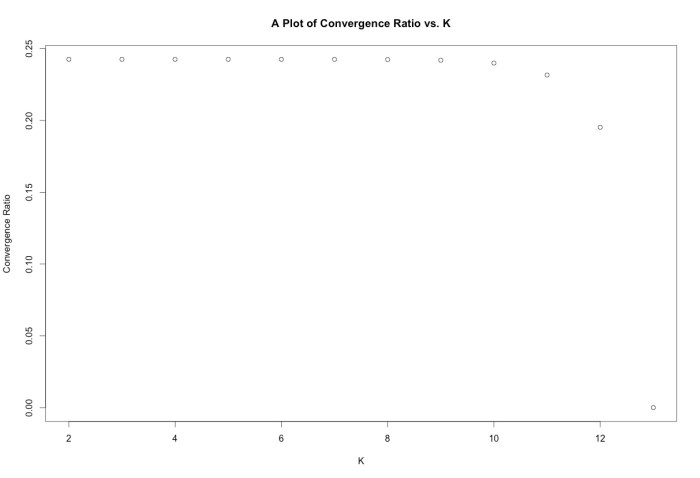

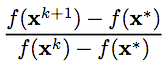

You can see the numerical solution matches the analytical solution found by the standard partial derivative method. The R function also outputs a plot of f(x) as a function of the iteration number as well as a plot of the convergence ratio, given by:

The plots for this function are shown below:

The plots for this function are shown below:

For fun I also created a simple function that uses the necessary and sufficient conditions to check if an x* derived from analytical procedures is a global minimum. The first condition to be a global minimum is that the gradient must be equal to zero. The second fact is that the Hessian matrix of a convex function must be positive semidefinite for all x.

However, if the Hessian can only be verified to be positive semidefinite for a ball of diameter 2*epsilon centered around a given x*, we can only be certain that x* is a local minimum.

On the other hand if the Hessian is not positive semidefinite we aren’t sure of much since we don’t know anything about the shape of the function. Finally, if the gradient is not zero we know for certain that we are not at a minimum since there is some direction we can travel that will lead to a lower f(x).

analyticTest <- function(func, x.star, convex = FALSE) { require(numDeriv) result <- try(hess <- hessian(func,x.star)) if(class(result) == "try-error") { stop("Error: Make sure dimensions of function and x.star match.") } result <- try(grad <- round(grad(func,x.star),5)) if(class(result) == "try-error") { stop("Error: Make sure dimensions of function and x.star match.") } if(!isTRUE(all(eigen(hess)[[1]]>0))) { print("Hessian matrix not semi-definite. x* not a local or global minimum.") print(1) return(FALSE) } else if(!isTRUE(all(grad == 0))) { print("Gradient at x star is not zero. x* not a local or global minimum.") print(2) return(FALSE) } else if(convex == TRUE) { print("x* is a global minimum.") print(3) return(TRUE) } else { print("x* may be a local or global minimum. Check convexity of function.") print(4) return(FALSE) } }