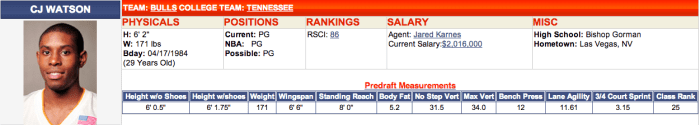

Another piece of information I wanted for my basketball analysis is players’ body measurements and skills test results. These measurements are taken at the pre-draft combine, where players are put through a series of simple drills such as a no-step vertical leap test. For instance, here are C.J. Watson’s measurements.

In my first iteration of the project I simply downloaded the data from Draft Express’s main measurement page (Draft Express is the only site I know of that keeps this data). The problem is that many players are listed more than once, with a varying number of NAs in each entry. Combining these entries to get the most complete record is quite difficult and causes problems when merging the data.
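To make the duplicate-entry problem concrete, here is a minimal base R sketch of one way to collapse repeated rows by keeping the first non-missing value in each column. The data frame, its column names, and the helper `first_non_na` are hypothetical and are not taken from the Draft Express data. The sketch also assumes duplicate entries never disagree, which is part of what makes the real data hard to combine.

```r
# Hypothetical measurements with more than one row per player
measurements <- data.frame(
  Player   = c("Player A", "Player A", "Player B"),
  Height   = c(74, NA, 80),
  Wingspan = c(NA, 78, 84),
  stringsAsFactors = FALSE
)

# Hypothetical helper: first non-missing value in a vector (NA if all are missing)
first_non_na <- function(x) x[!is.na(x)][1]

# Collapse to one row per player; na.pass keeps rows that contain NAs
combined <- aggregate(. ~ Player, data = measurements,
                      FUN = first_non_na, na.action = na.pass)
print(combined)
```

When two entries for the same player hold different non-missing values, this simply keeps whichever comes first, so it is a starting point rather than a real fix.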

For this round of data gathering I wanted to make the process easier, so I decided to go to each player’s page directly, where the most complete record of the measurements is held. I had the same problem here as when scraping the RealGM site: each player has a unique ID number that I needed to look up. Luckily, Draft Express keeps all 3,000 player measurement records on a single page, and clicking on a player’s name on this page takes you to their player page. This means that somewhere in the page source is a link to each player page, which can easily be scraped by searching for the first portion of the player’s page link, which is fixed.

I was happy to find that stringr’s str_extract() function is vectorized, which means that in this case I could download the player measurement page source one time and use a single function call to extract all of the unique player IDs. It was much easier than having to use a for() loop.
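As a small illustration of that vectorized behavior, the sketch below runs one str_extract() call with a vector of patterns against a single string. The `content` string is a made-up stand-in for the real page source, and "Missing-Player" is a hypothetical name with no link on the page. The two profile paths are the ones that appear later in this post.

```r
library(stringr)

# Made-up stand-in for the downloaded page source (assumption, not real HTML)
content <- paste0(
  '<a href="/profile/CJ-Watson-569/">CJ Watson</a>',
  '<a href="/profile/Tim-Hardaway-Jr-6368/">Tim Hardaway Jr</a>'
)

# Names already in search format; "Missing-Player" is hypothetical
players <- c("CJ-Watson", "Tim-Hardaway-Jr", "Missing-Player")

# One call: the single string is searched once per pattern
links <- str_extract(content, paste0("/profile/", players, "-[0-9]+"))
print(links)
```

The result has one element per player, with NA where no link was found, so it lines up with the vector of names without any loop.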

```r
# Load libraries
library(RCurl)
library(stringr)
library(data.table)

# Transform player names to search format (spaces and apostrophes become hyphens)
players <- as.character(players.DF$Player)
players <- gsub(" ", "-", players)
players <- gsub("'", "-", players)

# Get page source to search
url <- "www.draftexpress.com/nba-pre-draft-measurements/?year=All&sort2=DESC&draft=&pos=&source=All&sort=1"
content <- getURLContent(url)

# Search for players (one pattern per player, all in a single call)
links <- str_extract(content, paste0('/profile/', players, '-[0-9]+'))

# Concatenate links
found <- !is.na(links)
links[found] <- paste0('http://www.draftexpress.com', links[found], '/')

# Cleanup dataframe: keep the player names alongside their links
links <- data.frame(players, links, stringsAsFactors = FALSE)
setnames(links, c("players", "links"), c("Players", "Links"))

# Save file
write.csv(links, file = "~/Desktop/NBA Project/Player Measurments/Player Links.csv")
```

This leads to a large number of NAs for players, which was a problem in my original analysis as well. I’m not sure how Draft Express collects the measurement data, but it seems that data is simply not available for many players. Nonetheless, I investigated some of the missing data to see if there was a coding reason that so many players’ data was unavailable. I noticed that Draft Express player links sometimes include periods and sometimes don’t (C.J. Watson vs. CJ Watson). However, after adjusting for this, only two additional players were picked up.

```r
# See if simplifying strings further gives more players
missing <- links[which(is.na(links[[2]])), ]
missing <- gsub("\\.", "", missing[[1]])
missing <- gsub(",", "", missing)
missingLinks <- str_extract(content, paste0('/profile/', missing, '-[0-9]+'))

# These players were identified
# http://www.draftexpress.com/profile/CJ-Watson-569/
# http://www.draftexpress.com/profile/Tim-Hardaway-Jr-6368/
```